When Imitation Crosses the Line: What the Studio Ghibli AI Controversy Tells Us About the Future of Music

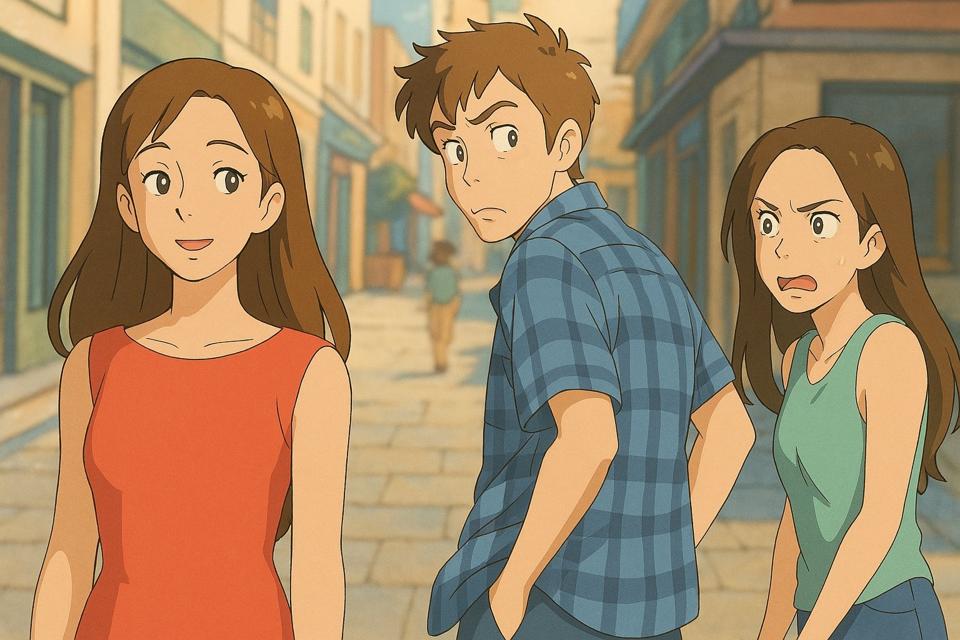

Earlier this year, the internet exploded with controversy when users of ChatGPT's image generation tool began uploading memes and personal photos to be transformed in the style of Studio Ghibli. Cats were reborn as characters from My Neighbor Totoro. Olympic athletes were reimagined as anime icons. The trend, quickly dubbed "Ghiblification," spread like wildfire across social media.

The Backlash from Creators

Studio Ghibli co-founder Hayao Miyazaki has long voiced his disdain for AI-generated content, once calling an AI-generated animation demo "an insult to life itself." While OpenAI says it has implemented guardrails against copying the style of individual living artists, the Ghiblification trend demonstrated just how easy it still is to recreate and remix a signature aesthetic without any credit or compensation to the original creators.

The backlash was swift. Artists and animators condemned the trend as a form of creative theft, arguing that AI models had been trained on Ghibli's decades of hand-drawn work without consent. The controversy forced a broader conversation about where inspiration ends and imitation begins in the age of generative AI.

What This Means for Music

Just as Studio Ghibli's distinctive visual language has been co-opted by generative AI models, musicians are seeing their sound, style, and identity mimicked without consent. AI developers train their models on massive datasets of commercial music, and the resulting systems can produce convincing imitations of specific artists, genres, and production styles.

The core issue remains the same across visual art and music: there is no requirement for consent, no system of remuneration, and no accountability. A generative AI tool can produce a track that sounds remarkably like a specific artist, and the creator of the original work receives nothing.

The Legal Gray Zone

Current copyright law was designed for a world where copying required deliberate human effort. AI-generated content occupies a legal gray zone. While directly reproducing a copyrighted recording is clearly infringement, generating new content that captures the "feel" or "style" of an artist exists in murkier territory.

Several high-profile cases are beginning to test these boundaries. Labels and publishers are filing suits against AI companies for using copyrighted music in training data without authorization. But the legal frameworks have not yet caught up with the technology.

The Scale of AI Music Generation

The volume of AI-generated music is growing rapidly. Platforms like Udio and Suno can generate convincing tracks in seconds. Some estimates suggest that tens of thousands of AI-generated tracks are being uploaded to streaming platforms daily, many of them deliberately imitating the styles of popular artists.

This creates a dual problem for rights holders: their original works are being used without consent to train AI models, and the resulting AI-generated content competes directly with their music on streaming platforms and in licensing markets.

Building Systems That Center Consent and Compensation

If we want to safeguard creativity in the AI age, we need to build systems that center consent and compensation. This means establishing clear rules about how copyrighted works can be used in AI training, creating mechanisms for rights holders to opt in or out, and ensuring that creators are fairly compensated when their work contributes to AI-generated output.
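To make the idea of consent and compensation concrete, here is a minimal sketch of what such a system might look like in code. Everything in it is hypothetical: the `ConsentRegistry` class, the work IDs, and the contribution weights are illustrative assumptions, not a description of any existing platform or standard. It assumes a default-deny policy (a work with no recorded decision is excluded from training) and a simple pro rata split of a payout pool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ConsentRegistry:
    """Hypothetical registry mapping a work ID to the rights holder's decision."""

    opted_in: dict[str, bool] = field(default_factory=dict)

    def set_consent(self, work_id: str, allowed: bool) -> None:
        # Record an explicit opt-in (True) or opt-out (False) for one work.
        self.opted_in[work_id] = allowed

    def filter_training_set(self, work_ids: list[str]) -> list[str]:
        # Default-deny assumption: works with no recorded decision are excluded.
        return [w for w in work_ids if self.opted_in.get(w, False)]


def split_royalties(pool_cents: int, weights: dict[str, float]) -> dict[str, int]:
    """Split a payout pool pro rata by (hypothetical) contribution weights.

    Amounts are rounded down to whole cents, so a small remainder may be left over.
    """
    total = sum(weights.values())
    if total <= 0:
        return {holder: 0 for holder in weights}
    return {holder: int(pool_cents * w / total) for holder, w in weights.items()}


registry = ConsentRegistry()
registry.set_consent("track-001", True)
registry.set_consent("track-002", False)

# "track-003" has no recorded decision, so it is excluded along with "track-002".
allowed = registry.filter_training_set(["track-001", "track-002", "track-003"])
payouts = split_royalties(1000, {"artist-a": 3.0, "artist-b": 1.0})
```

The hard part in practice is not the bookkeeping but deciding how contribution weights are measured in the first place, which this sketch simply takes as given.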

Technology also has a role to play on the detection side. Just as AI can generate content, it can also be used to identify when copyrighted material or distinctive artistic styles have been replicated without authorization.
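As a rough illustration of how detection can work in principle, the sketch below compares a candidate track against a catalog using cosine similarity. It assumes each track has already been reduced to a numeric feature vector by some audio model; the vectors, the track names, and the 0.9 threshold are all made up for the example, and this is a generic technique rather than a description of how any particular commercial product operates.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # An all-zero vector carries no direction to compare.
    return dot / (norm_a * norm_b)


def flag_matches(candidate, catalog, threshold=0.9):
    """Return (title, score) pairs at or above the threshold, best match first."""
    scored = [(title, cosine_similarity(candidate, vec)) for title, vec in catalog.items()]
    hits = [(title, score) for title, score in scored if score >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical feature vectors; a real system would derive these from audio.
catalog = {
    "Original Song A": [1.0, 0.0, 0.0],
    "Original Song B": [0.0, 1.0, 0.0],
}
uploaded_track = [0.9, 0.1, 0.0]

matches = flag_matches(uploaded_track, catalog)
for title, score in matches:
    print(f"Possible imitation of {title!r} (similarity {score:.2f})")
```

A flagged match is only a signal for human review. Where to set the threshold is a judgment call: too low and legitimate, merely similar music gets flagged; too high and close imitations slip through.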

How MatchTune Is Responding

MatchTune is working to empower music rights holders with the tools they need to detect and address AI-enabled misuse of their work. Through technologies like CoverNet, MatchTune provides AI-powered detection that can identify when a piece of music has been replicated, covered, or imitated without proper authorization.

The Studio Ghibli controversy is a warning sign for the entire creative industry. The question is not whether AI will continue to imitate human creativity, but whether we will build the safeguards needed to protect the creators whose work makes that imitation possible.